This article will be published soon

Scheduled for: 2026-05-10 01:00

The article will appear automatically when the time arrives — no need to refresh.

The Battle of Open-Weight Language Models

The year 2026 has witnessed a qualitative leap in the world of Large Language Models (LLMs), with open-weight models approaching the performance of major commercial models. According to SitePoint analysis, the quality gap on benchmarks like MMLU-Pro no longer exceeds 3-5% between the best open-weight models and leading commercial models.

Leading Open-Weight Models in 2026

Meta Llama 4

Meta released the Llama 4 series in April 2026 with two main variants:

| Model | Active Parameters | Total Parameters | Context Window |

|---|---|---|---|

| Llama 4 Scout | 17B | 17B | 10 million tokens (standard) |

| Llama 4 Maverick | 17B | 400B (128 experts) | 512K tokens |

Key Features:

- Natively multimodal (text + image)

- Supports 200 languages in training, with 12 fine-tuned languages including Arabic

- Outperforms GPT-4o and Gemini 2.0 Flash on standard benchmarks

- Community license allowing commercial use with restrictions for users over 700 million monthly active users

Mistral Large 2

The French open-weight model that competes strongly:

- 128K token context window

- MMLU: 84%

- API price: $3 (input) / $9 (output) per million tokens

- 33% cheaper than Claude Sonnet 4.6

- Excellent performance in coding, reasoning, and function calling

Qwen 3 & Qwen 3.5 (Alibaba)

Alibaba’s latest AI releases:

| Model | Key Features |

|---|---|

| Qwen 3 | Supports 119 languages, including Arabic |

| Qwen 3.5 | Supports 201 languages, Apache 2.0 license, multimodal |

- Available in various sizes up to 235B parameters with Mixture-of-Experts architecture

- Lightweight enough to run locally on powerful workstations

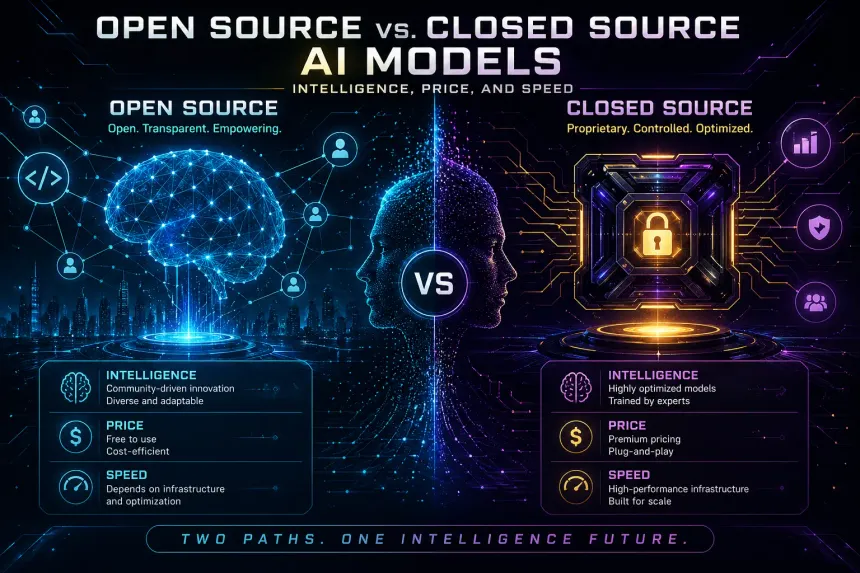

Comparative Analysis: Open-Weight vs Commercial

According to SitePoint, the comparison in 2026:

| Dimension | Open-Weight | Commercial (API) |

|---|---|---|

| Cost at scale (50M+ tokens/day) | 40-60% lower | Higher per-token fees |

| Privacy | Full control, local deployment | Third-party data transfer |

| Quality | 3-5% behind leading models | Best for complex reasoning |

| Operational overhead | 0.5-1.0 DevOps engineer | Minimal |

| Break-even point (savings kick in) | Above 10-30M tokens/day | Below 10M tokens/day |

The cost of hosting a model like Llama 4 on dedicated GPU hardware (e.g., a 1-year H100 contract) ranges between $0.20 to $1.00 per million tokens, depending on server, volume, and compression.

Remaining Challenges

- Not fully “open source”: Most of these models are only “open-weight”; training data and full training code are not always available

- Power consumption: Running a 70B model requires about 140GB of VRAM (two A100s) at FP16 precision, though compression reduces requirements

- Arabic integration: Llama 4 and Qwen support Arabic, but performance may vary by domain

- Geographic restrictions: Llama 4 usage is restricted in the European Union per Meta’s policy

Summary

Open-weight models are no longer just academic alternatives — in 2026, they are a strategic choice for businesses and developers. The gap with GPT-4o and Claude is narrowing rapidly, with significantly lower cost at scale.

For the latest models and updates:

Quick cost comparison (approximate API, 1M input + 1M output tokens):

| Model | Price (per 1M mixed tokens) |

|---|---|

| Llama 4 (self-hosted) | ~$0.50 |

| GPT-4o | $12.50 ($2.5 input + $10 output) |

| Claude Sonnet 4.6 | $18 ($3 + $15) |

| Mistral Large 2 (API) | $12 ($3 + $9) |

| Gemini 2.0 Pro | ~$6.25 ($1.25 + $5) |

With rapid ongoing development, attention turns to whether open-source models will be able to close the gap completely in the coming years.